Playing chess with a graph neural network

I recently uploaded a repository to GitHub called chess-gnn that provides the basic machinery for training a graph neural network to play chess. The code is available here: github.com/jordanshivers/chess-gnn.

You can perform supervised training on human games from Lichess, optionally refine the model with self-play reinforcement learning, and estimate its strength with calibrated games against Stockfish. You can also play directly against a trained model in a web interface.

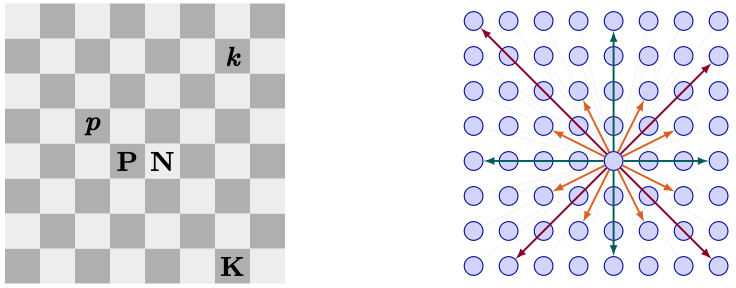

Each square is a node in a fully connected directed graph (64 nodes, with an edge for every ordered pair of distinct squares, so 64 × 63 = 4032 edges). Node features record which piece (if any) sits on the square, the square’s location, and a global state (e.g., side to move) that is broadcast to every node. Edge features encode simple geometric information about the offset between the two squares, so the model does not have to rediscover basic chess geometry from raw coordinates alone.
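To make the graph layout concrete, here is a minimal sketch of how the edge list and geometric edge features could be built. It is not taken from the repository: the square numbering (a1 = 0, h8 = 63), the helper names `square_coords`, `edge_features`, and `build_edges`, and the particular six features are all assumptions chosen for illustration.

```python
from itertools import permutations

NUM_SQUARES = 64


def square_coords(sq):
    """Return (file, rank), each in 0..7, assuming a1 = 0 and h8 = 63."""
    return sq % 8, sq // 8


def edge_features(src, dst):
    """Hypothetical geometric features for the directed edge src -> dst."""
    src_file, src_rank = square_coords(src)
    dst_file, dst_rank = square_coords(dst)
    dx, dy = dst_file - src_file, dst_rank - src_rank
    return [
        dx / 7.0,                                     # normalized file offset
        dy / 7.0,                                     # normalized rank offset
        float(dy == 0),                               # same rank
        float(dx == 0),                               # same file
        float(abs(dx) == abs(dy)),                    # same diagonal
        float(sorted((abs(dx), abs(dy))) == [1, 2]),  # knight jump
    ]


def build_edges():
    """Build a [2, E] edge index and an [E, 6] edge feature list."""
    # Every ordered pair of distinct squares: 64 * 63 = 4032 directed edges.
    pairs = list(permutations(range(NUM_SQUARES), 2))
    edge_index = [[s for s, _ in pairs], [d for _, d in pairs]]
    edge_attr = [edge_features(s, d) for s, d in pairs]
    return edge_index, edge_attr


edge_index, edge_attr = build_edges()
print(len(edge_attr))  # 4032
```

The two-row edge index follows the layout PyTorch Geometric expects, so these lists could be wrapped in tensors and passed along as `edge_index` and `edge_attr`.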

The GNN uses a stack of PyTorch Geometric TransformerConv layers with edge conditioning. The readout is a policy head that assigns a score to each of the 4096 ordered (from, to) square pairs, with illegal moves masked out. In the movie above, the arrows show the model’s top-ranked moves, with opacity indicating the score. The model shown was trained on a few million Lichess games restricted to players with Elo ratings above 1800.
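The legal-move masking can be sketched in a few lines of plain Python. This is an illustration, not the repository's code: the flat index `from_sq * 64 + to_sq`, the a1 = 0 square numbering, and the helper names `move_index` and `masked_policy` are assumptions, and the legal moves are passed in by hand rather than generated by a chess library.

```python
import math


def move_index(from_sq, to_sq):
    """Flatten an ordered (from, to) pair into an index in 0..4095."""
    return from_sq * 64 + to_sq


def masked_policy(logits, legal_moves):
    """Softmax over legal moves only; every illegal move gets probability 0."""
    legal_idx = [move_index(f, t) for f, t in legal_moves]
    if not legal_idx:
        raise ValueError("no legal moves to choose from")
    # Subtract the largest legal logit for numerical stability.
    max_logit = max(logits[i] for i in legal_idx)
    exps = {i: math.exp(logits[i] - max_logit) for i in legal_idx}
    total = sum(exps.values())
    probs = [0.0] * len(logits)
    for i, value in exps.items():
        probs[i] = value / total
    return probs


# Toy example: three legal moves (e2e4, e2e3, g1f3) with a1 = 0, h8 = 63.
logits = [0.0] * 4096
logits[move_index(12, 28)] = 2.0  # the model prefers e2e4
legal = [(12, 28), (12, 20), (6, 21)]
probs = masked_policy(logits, legal)
best = max(range(4096), key=probs.__getitem__)
print(best == move_index(12, 28))  # True
```

In a tensor framework the same effect is usually achieved by setting illegal logits to negative infinity before the softmax, which keeps the operation batched and differentiable.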